For a continuous random variable, differential entropy is analogous to entropy.

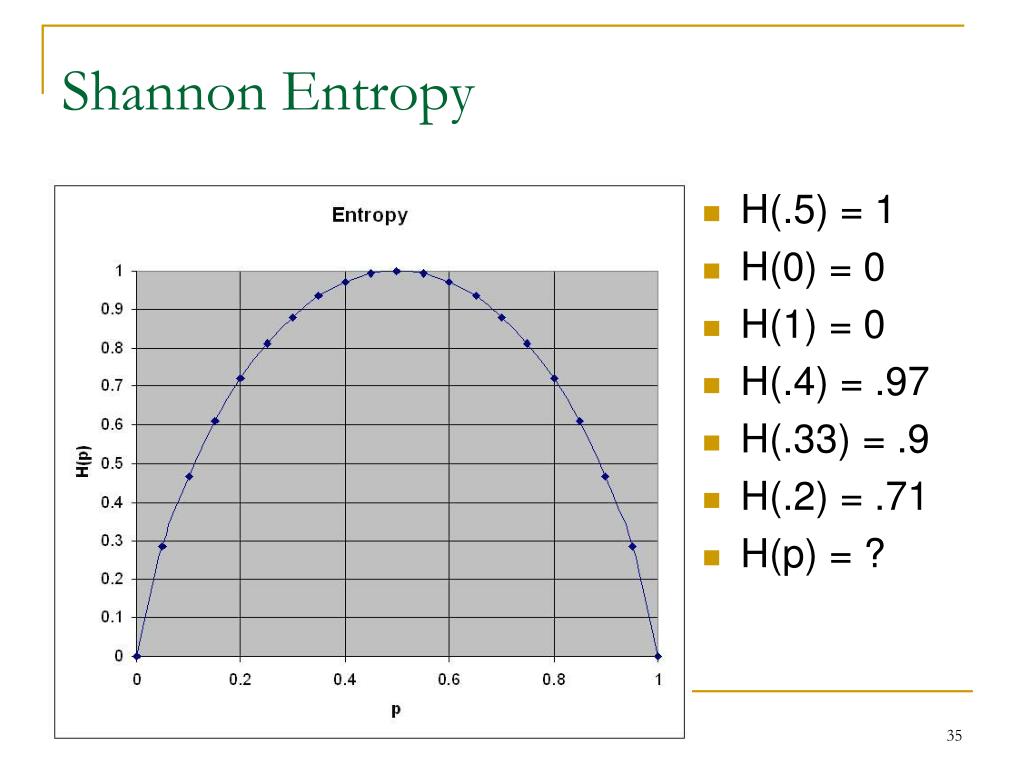

The definition can be derived from a set of axioms establishing that entropy should be a measure of how informative the average outcome of a variable is. Entropy has relevance to other areas of mathematics such as combinatorics and machine learning.

Generally, information entropy is the average amount of information conveyed by an event, when considering all possible outcomes. For a discrete random variable $X$ whose outcomes $x$ occur with probability $p(x)$, the entropy is $H(X) = -\sum_{x} p(x) \log p(x)$, where $\Sigma$ denotes the sum over the variable's possible values. The choice of base for the logarithm varies for different applications. Base 2 gives the unit of bits (or "shannons"), while base e gives "natural units" (nats), and base 10 gives units of "dits", "bans", or "hartleys". An equivalent definition of entropy is the expected value of the self-information of a variable. For example, in the case of two fair coin tosses, the information entropy in bits is the base-2 logarithm of the number of possible outcomes; with two coins there are four possible outcomes, and two bits of entropy.

The concept of information entropy was introduced by Claude Shannon in his 1948 paper "A Mathematical Theory of Communication", and is also referred to as Shannon entropy. Shannon's theory defines a data communication system composed of three elements: a source of data, a communication channel, and a receiver. The "fundamental problem of communication", as expressed by Shannon, is for the receiver to be able to identify what data was generated by the source, based on the signal it receives through the channel. Shannon considered various ways to encode, compress, and transmit messages from a data source, and proved in his famous source coding theorem that the entropy represents an absolute mathematical limit on how well data from the source can be losslessly compressed onto a perfectly noiseless channel. Shannon strengthened this result considerably for noisy channels in his noisy-channel coding theorem.

Entropy in information theory is directly analogous to the entropy in statistical thermodynamics. The analogy results when the values of the random variable designate energies of microstates, so the Gibbs formula for the entropy is formally identical to Shannon's formula.
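The two-coin example and the role of the logarithm base can be checked numerically. The following is a minimal sketch, assuming the standard discrete definition of Shannon entropy (the negative sum of p·log p over outcomes); the function name `shannon_entropy` and its `base` parameter are illustrative choices, not something taken from the text above.

```python
import math


def shannon_entropy(probs, base=2.0):
    """Entropy of a discrete distribution given as a list of probabilities.

    base=2 gives bits (shannons), base=math.e gives nats,
    base=10 gives hartleys (dits, bans).
    """
    if any(p < 0 for p in probs):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probs), 1.0):
        raise ValueError("probabilities must sum to 1")
    # Outcomes with probability 0 contribute nothing (limit of p*log p as p -> 0).
    return -sum(p * math.log(p) / math.log(base) for p in probs if p > 0)


# Two fair coin tosses: four equally likely outcomes.
two_coins = [0.25, 0.25, 0.25, 0.25]

bits = shannon_entropy(two_coins, base=2)          # about 2 bits
nats = shannon_entropy(two_coins, base=math.e)     # about 2 * ln 2 nats
hartleys = shannon_entropy(two_coins, base=10)     # about 2 * log10(2) hartleys

print(f"{bits:.4f} bits, {nats:.4f} nats, {hartleys:.4f} hartleys")
```

Changing the base only rescales the result by a constant factor, which is why the same distribution can be reported in bits, nats, or hartleys.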
